Affordable AI ascends: a newsletter deep dive into the latest Low-Cost Language Model wonders

The PoorGPU guy Newsletter 2024 week 2 - All the incredible new projects and improvements around affordable LLMs

Move over, mega-models!

The spotlight is shifting, and affordable LLMs, once relegated to the sidelines, are taking center stage with a chorus of incredible innovations.

This newsletter is your backstage pass to this exciting revolution, where smaller models are proving that big things come in budget-friendly packages.

Forget the days of sky-high cloud bills and exclusive access for tech giants. Affordable LLMs are democratizing AI, making this powerful technology accessible to businesses of all sizes and even individual developers. From code generation to customer service chatbots, the possibilities are endless, and the latest advancements are nothing short of mind-blowing.

Lightweight champions

Imagine tiny titans like Flan-T5 and Microsoft Phi-2, packing a punch with just a fraction of the parameters of their behemoth counterparts. These nimble minds are rewriting the efficiency playbook, running seamlessly on even modest hardware and delivering impressive results without breaking the bank.

But it's not just about cost savings: these models are pushing the boundaries of what's possible with their focus on easily explainable parameters, customization, and real-world application.

The latest champion is Tinyvicuna-1B: as part of this newsletter, you can read the Medium article for free.

A brand new start?

Ok, but what if you are a total beginner?

Don’t worry, I've got you covered. You can start your explorations with this article.

So, buckle up, fellow AI enthusiasts, as we delve into the fascinating world of affordable LLMs. We'll explore groundbreaking new projects, uncover hidden gems in the open-source landscape, and get insider tips on how to harness the power of these pocket-sized powerhouses for your own projects. Get ready to witness the democratization of AI, where everyone can join the chorus of innovation and experience the magic of language models firsthand. The future of affordable AI is looking brighter than ever!

News from the Big AI community

Encoders encore

Encoder models are not dead! I would say that they are still a shining star, but they are underestimated. MosaicML has recently started to modernize and update BERT.

Although BERT-style encoder models are heavily used in NLP research, many researchers do not pretrain their own BERTs from scratch due to the high cost of training. In the past half-decade since BERT first rose to prominence, many advances have been made with other transformer architectures and training configurations that have yet to be systematically incorporated into BERT.

MosaicML introduces MosaicBERT, a BERT-style encoder architecture and training recipe that is empirically optimized for fast pretraining. This efficient architecture incorporates the new techniques of FlashAttention, Attention with Linear Biases (ALiBi), Gated Linear Units (GLU), a module to dynamically remove padded tokens, and low precision LayerNorm into the classic transformer encoder block.

You can read the official paper here.

You can check the Repo here.
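To make one of those ingredients concrete, here is a minimal sketch of the idea behind ALiBi: instead of learned position embeddings, each attention head adds a penalty to its attention scores that grows linearly with the distance between two tokens. This is not MosaicBERT's code. The function names are hypothetical, the slope formula is the geometric sequence from the ALiBi paper for a power-of-two number of heads, and the symmetric `abs(i - j)` form is my assumption for a bidirectional encoder.

```python
def alibi_slopes(num_heads):
    """One slope per head: 2^(-8/n), 2^(-16/n), ... (assumes num_heads is a power of two)."""
    start = 2 ** (-8 / num_heads)
    return [start ** (h + 1) for h in range(num_heads)]


def symmetric_alibi_bias(seq_len, slope):
    """Bias added to the attention logits of one head.

    Zero on the diagonal, increasingly negative as tokens get farther apart.
    Using abs(i - j) is an assumption for an encoder that attends in both directions.
    """
    return [[-slope * abs(i - j) for j in range(seq_len)] for i in range(seq_len)]


slopes = alibi_slopes(8)                    # 8 heads: 1/2, 1/4, ..., 1/256
bias = symmetric_alibi_bias(4, slopes[0])   # 4x4 bias matrix for the first head
for row in bias:
    print(row)
```

Heads with a large slope focus on nearby tokens, while heads with a small slope can still look far away, and nothing has to be learned for positions.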

RAG is not dead (yet?)

There are signals that the focus will soon shift away from external tools (LlamaIndex, LangChain) and toward RAG-centric LLMs that are able to leverage very long context windows. You can read a few ideas in this amazing article by Eric Risco on Medium.

However, even if RAG has flaws… we are not there yet. And honestly I prefer the idea of an agnostic LLM, where the data processing is completely segregated from the LLM.

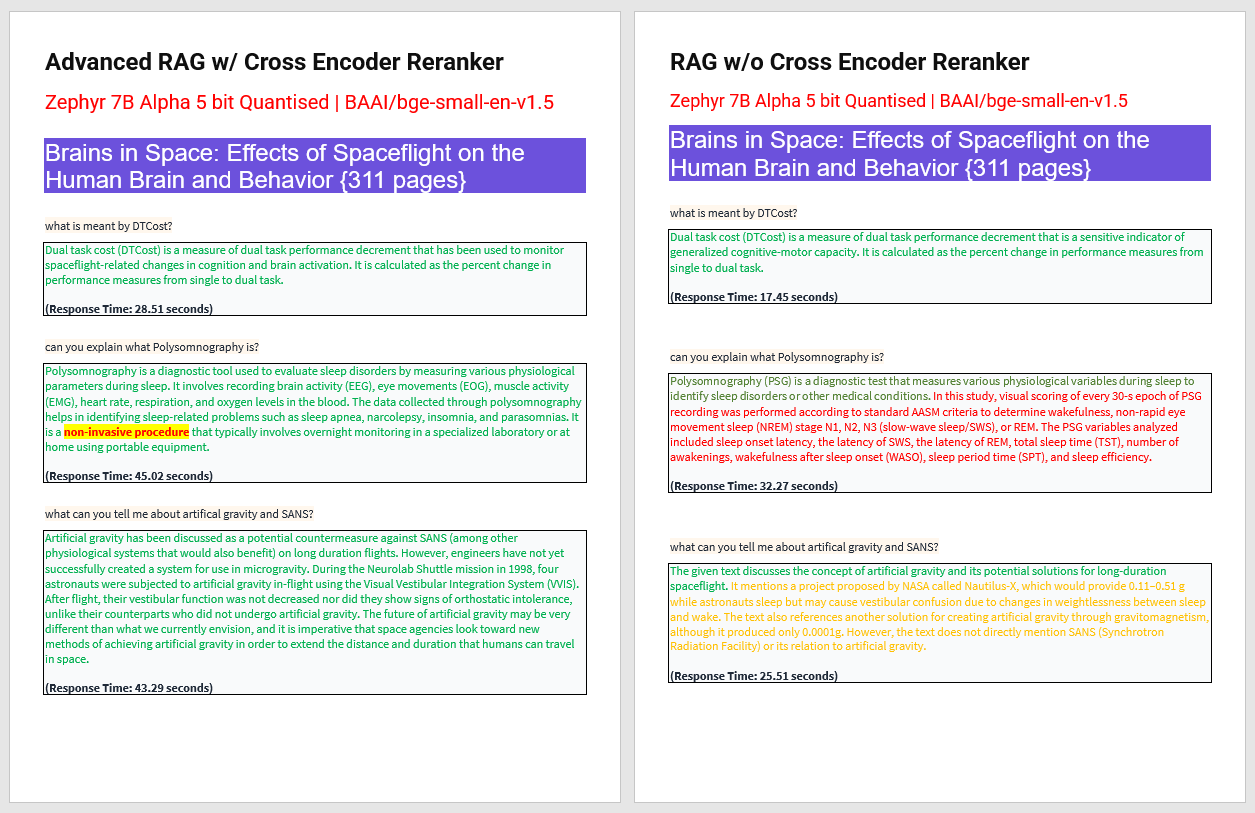

Do you want to see one of the great strategies for working with RAG? Here is a neat qualitative example by @MountainMicky highlighting why you should always include re-ranking in your advanced RAG pipeline.

✅ For simple queries, naive RAG is fine.

⚠️ For advanced queries though, you need a re-ranker to return precise, relevant context to answer the question and weed out the fluff. Best of all, this repo features fully open-source LLM (zephyr), embedding (BGE), and reranking models, meaning you can get set up and running on your machine in no time.

You can check the repo on GitHub here.
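If the two-stage idea is new to you, here is a toy sketch of it in plain Python. It is not the code from the repo. Both scoring functions are hypothetical stand-ins: the first stage plays the role of a cheap embedding search, and the second plays the role of a real re-ranking model that reads the query and each candidate together.

```python
def tokenize(text):
    """Lowercase words with surrounding punctuation stripped."""
    return [w.strip(".,?!").lower() for w in text.split()]


def retrieve(query, docs, k):
    """Stage 1 (cheap, recall-oriented): rank by how many query words a document shares."""
    q = set(tokenize(query))
    ranked = sorted(docs, key=lambda d: len(q & set(tokenize(d))), reverse=True)
    return ranked[:k]


def rerank(query, candidates, top_n):
    """Stage 2 (precise): toy stand-in for a re-ranking model.

    Scores the fraction of a document's words that are query words,
    so long passages padded with fluff are pushed down.
    """
    q = set(tokenize(query))

    def score(doc):
        toks = tokenize(doc)
        return sum(t in q for t in toks) / len(toks)

    return sorted(candidates, key=score, reverse=True)[:top_n]


docs = [
    "RAG is a popular topic and a lot of people like to talk about it a lot.",
    "A re-ranker reorders retrieved passages for RAG.",
    "Bananas are yellow.",
]
query = "why add a re-ranker to RAG"

candidates = retrieve(query, docs, k=2)      # broad first pass
best = rerank(query, candidates, top_n=1)    # precise second pass
print(best)
```

The first pass puts the fluffy document on top because it shares just as many words with the query. The second pass promotes the short, on-topic passage, which is the one you want to hand to the LLM.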

Garbage In Garbage Out

You may already know this, but in any project involving data handling… the data must be of high quality.

In ML work this is a given, but it is often overlooked in generative AI.

For these reasons many open-source and proprietary groups are working on better PDF parsing methods. At the moment there is no plug-and-play product out there. If you try to use LangChain to load a PDF, you will get the text, but no structure, no relations and a lot of garbage.

JPMorgan presents DocGraphLM to the world to tackle this long-known issue. You can read more on the paper page.

Advances in Visually Rich Document Understanding (VrDU) have enabled information extraction and question answering over documents with complex layouts. Two tropes of architectures have emerged: transformer-based models inspired by LLMs, and Graph Neural Networks.

In their paper, JPMorgan introduces DocGraphLM, a novel framework that combines pre-trained language models with graph semantics. To achieve this, they propose a joint encoder architecture to represent documents, and a novel link prediction approach to reconstruct document graphs.

DocGraphLM predicts both directions and distances between nodes using a convergent joint loss function that prioritizes neighborhood restoration and downweighs distant node detection.
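To give a feeling for what such a joint loss could look like, here is a rough sketch. It is my own illustration, not the formula from the paper. I assume each candidate link has a true distance normalized to the range 0 to 1, a squared error for the distance, a cross-entropy for the direction class, a mixing factor `lam`, and a weight of `1 - true_dist` so that far-away nodes count less. All names are hypothetical.

```python
import math


def edge_loss(edge, lam=0.5):
    """Loss for one candidate link between two document nodes."""
    # Distance part: squared error on the normalized distance.
    dist_loss = (edge["pred_dist"] - edge["true_dist"]) ** 2
    # Direction part: cross-entropy on the probability of the true direction class.
    dir_loss = -math.log(edge["dir_probs"][edge["true_dir"]])
    # Assumed weighting: close neighbors matter most, distant nodes are downweighted.
    weight = 1.0 - edge["true_dist"]
    return weight * (lam * dist_loss + (1 - lam) * dir_loss)


def joint_link_loss(edges, lam=0.5):
    """Average the weighted per-link losses over all candidate links."""
    return sum(edge_loss(e, lam) for e in edges) / len(edges)


# Two links with the same prediction errors, one near and one far.
near = {"pred_dist": 0.3, "true_dist": 0.1, "dir_probs": [0.5, 0.5], "true_dir": 0}
far = {"pred_dist": 0.7, "true_dist": 0.9, "dir_probs": [0.5, 0.5], "true_dir": 0}

print(edge_loss(near), edge_loss(far))
print(joint_link_loss([near, far]))
```

With identical errors, the nearby link contributes far more to the total than the distant one, so training pushes the model to get the local neighborhood right first.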

This is only the start!

Hope you will find all of this useful. Feel free to contact me on Medium.

I am using Substack only for the newsletter. Every week I share free links here to my paid articles on Medium.

Follow me and read my latest articles: https://medium.com/@fabio.matricardi