Alibaba Cloud's Qwen2.5 - unprecedented performance and versatility

A New Era of natural language understanding and generation

Hi there,

I broke my usual routine because Qwen2.5 deserves a newsletter of its own.

If you have followed me so far, you may have noticed that for a long time I have been calling the Qwen family the best open-source models around. Not to mention that they are really good even in their small versions (500 M parameters…).

And when a Small Model is good, it means that the data, training and pipeline quality deserve attention and claps.

So let’s check them out!

Qwen2.5 family innovation

Qwen is the large language model and large multimodal model series of the Qwen Team, Alibaba Group. Just yesterday the large language models were upgraded to Qwen2.5.

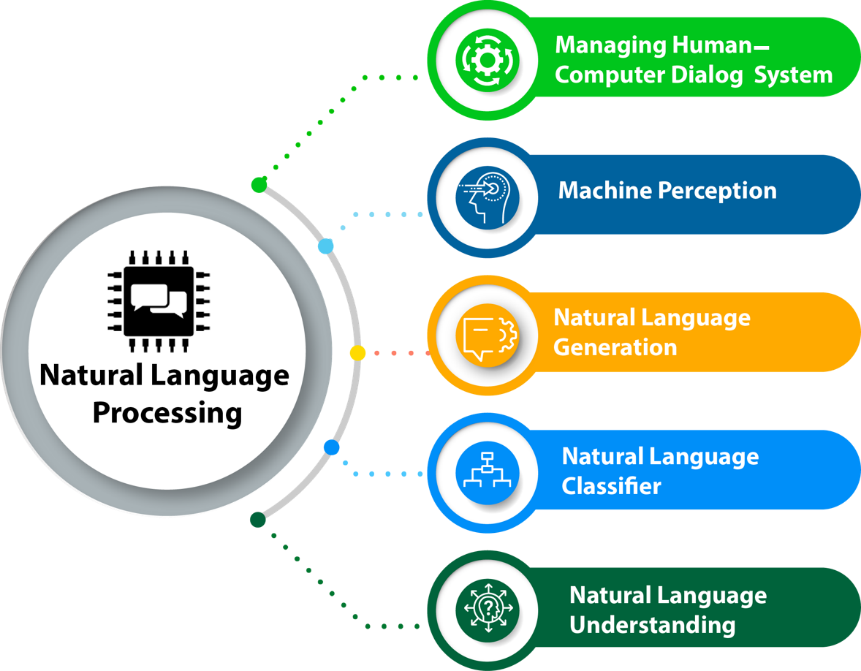

Both language models and multimodal models are pretrained on large-scale multilingual and multimodal data and post-trained on quality data to align them with human preferences. Qwen is capable of natural language understanding, text generation, vision understanding, audio understanding, tool use, role play, acting as an AI agent, and more.

With the recent release of Qwen2.5 and additional open-source models, Alibaba Cloud continues to build on its leading position in meeting rising AI demand from enterprise users. Since June last year, the Qwen family has attracted over 90,000 deployments via Model Studio in various industries including consumer electronics, automobiles, gaming, and more.

Qwen also expanded its reach with new models such as Qwen1.5-110B and CodeQwen1.5-7B on platforms like Hugging Face, showcasing Alibaba’s commitment to open-source AI development.

Declared scope, use cases and models

In the three months since Qwen2’s release, numerous developers have built new models on top of the Qwen2 language models, providing valuable feedback to the entire community and to Alibaba Cloud.

In the Qwen team's words: "During this period, we have focused on creating smarter and more knowledgeable language models. Today, we are excited to introduce the latest addition to the Qwen family: Qwen2.5."

Their claims come with facts about the new family of models:

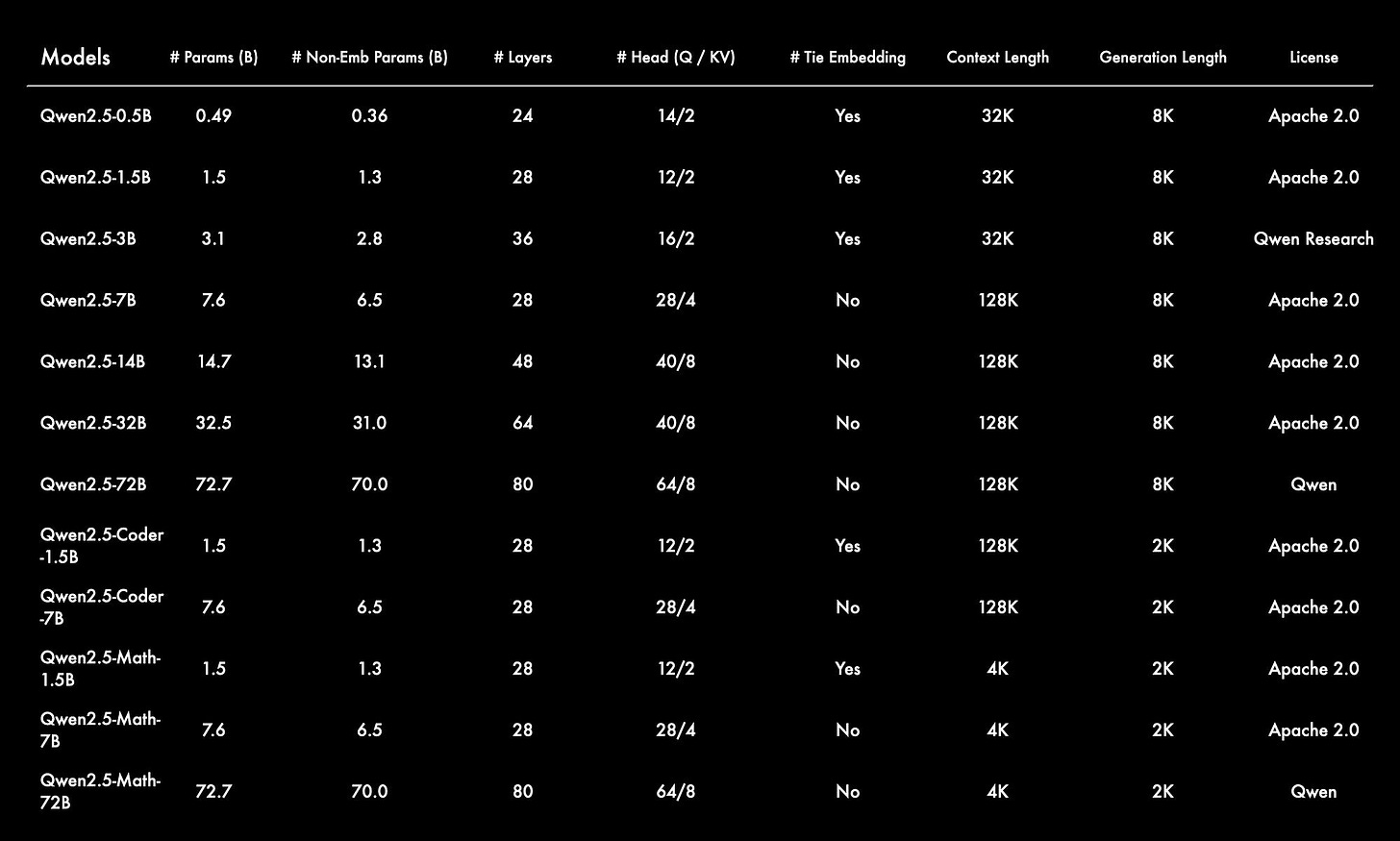

Dense, easy-to-use, decoder-only language models, available in 0.5B, 1.5B, 3B, 7B, 14B, 32B, and 72B sizes, and base and instruct variants.

Pretrained on our latest large-scale dataset, encompassing up to 18T tokens.

Significant improvements in instruction following, generating long texts (over 8K tokens), understanding structured data (e.g., tables), and generating structured outputs, especially JSON.

More resilient to the diversity of system prompts, enhancing role-play implementation and condition-setting for chatbots.

Context length support of up to 128K tokens, with generation of up to 8K tokens.

Multilingual support for over 29 languages, including Chinese, English, French, Spanish, Portuguese, German, Italian, Russian, Japanese, Korean, Vietnamese, Thai, Arabic, and more.
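To make the system prompt and JSON claims a bit more concrete, here is a minimal sketch of how a chat prompt for an instruct model can be laid out and how a JSON reply can be checked. It assumes the ChatML-style layout (`<|im_start|>` / `<|im_end|>` markers) used by Qwen chat models; the helper names `to_chatml` and `parse_json_reply` are my own, and the model reply is hard-coded for illustration, not real model output. In practice the chat template shipped with the model's tokenizer is the authoritative source.

```python
import json


def to_chatml(messages, add_generation_prompt=True):
    """Render a list of {"role", "content"} dicts as a ChatML-style prompt string."""
    parts = [
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages
    ]
    if add_generation_prompt:
        # Open the assistant turn so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


def parse_json_reply(reply):
    """Return the parsed JSON value, or None if the reply is not valid JSON."""
    try:
        return json.loads(reply)
    except json.JSONDecodeError:
        return None


messages = [
    {"role": "system", "content": "You are a data extractor. Reply with JSON only."},
    {"role": "user", "content": "Extract name and size from: 'Qwen2.5 comes in a 7B version.'"},
]
prompt = to_chatml(messages)
print(prompt)

# Hypothetical reply, hard-coded for illustration (not produced by a model here).
example_reply = '{"name": "Qwen2.5", "size": "7B"}'
parsed = parse_json_reply(example_reply)
print(parsed)
```

Validating the reply with `json.loads` is a cheap way to test the "structured outputs" claim yourself: if parsing fails, you know the model drifted from the requested format.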

Qwen2.5: a party of Foundation models

As announced on the official blog on September 19, 2024:

Today, we are excited to introduce the latest addition to the Qwen family: Qwen2.5. We are announcing what might be the largest opensource release in history! Let’s get the party started! Our latest release features the LLMs Qwen2.5, along with specialized models for coding, Qwen2.5-Coder, and mathematics, Qwen2.5-Math.

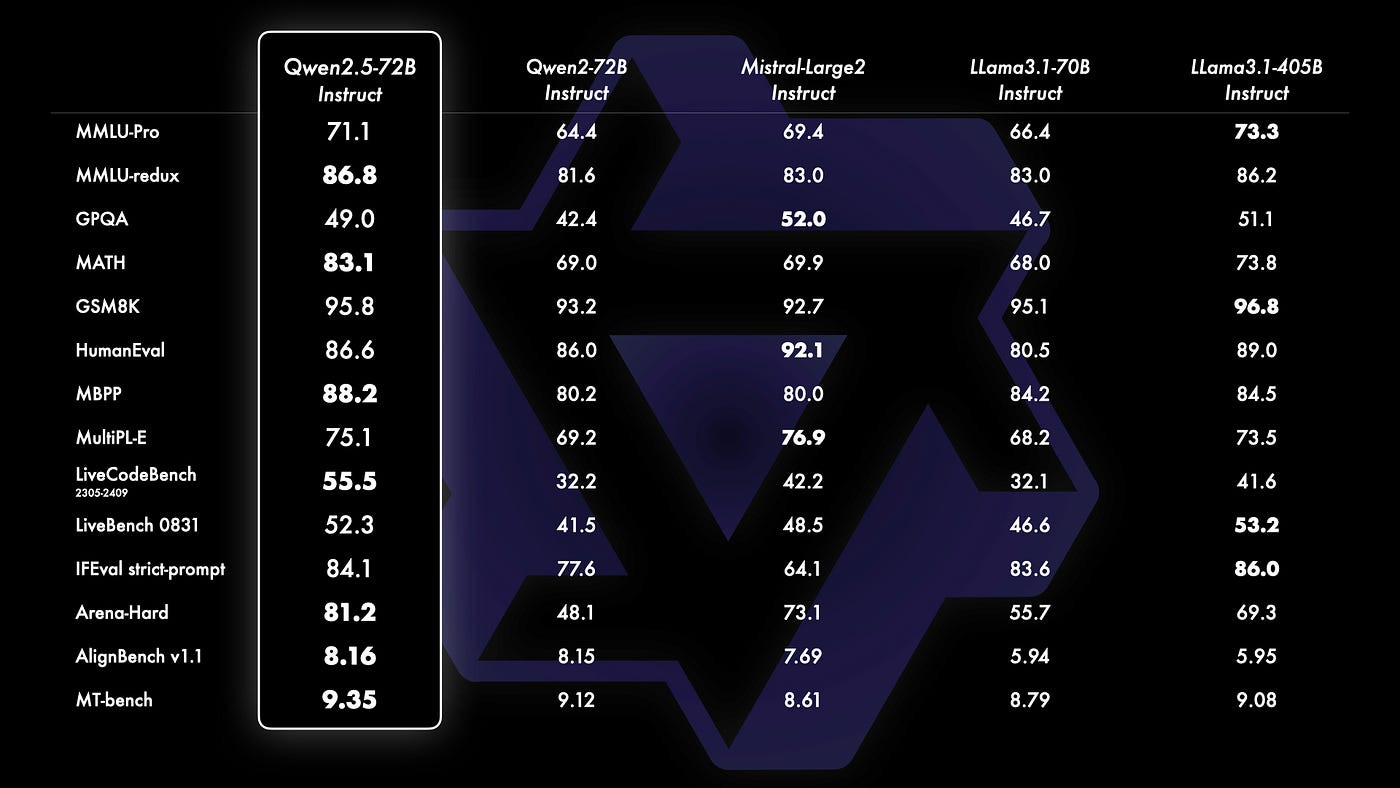

To showcase Qwen2.5’s capabilities, the Alibaba Cloud team benchmarked their largest open-source model, Qwen2.5-72B (a 72B-parameter dense decoder-only language model), against leading open-source models like Llama-3.1-70B and Mistral-Large-V2.

For your info, there is already a crazy (I mean genius) developer, Gavin Li (Founder of Anima AI, former Airbnb, Alibaba AI senior leader. Unicorn's AI advisor. Author of AirLLM…), who managed to run the King 72B model with only 4 GB of VRAM!

You can read more in his Medium article, Breakthrough: Running the New King of Open-Source LLMs QWen2.5 on an Ancient 4GB GPU.
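To get a feeling for why 4 GB is such a surprising number, here is a rough back-of-the-envelope sketch. It only counts the weights (no activations, no KV cache), and it assumes a layer count of 80 for the 72B model, which you should verify against the model's config file. The idea of holding only one layer in memory at a time is, as far as I understand it, the general approach behind this kind of trick; the function names are mine.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions, dtype="fp16"):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * BYTES_PER_PARAM[dtype]


def per_layer_memory_gb(params_billions, num_layers, dtype="fp16"):
    """Rough weight size of one layer, pretending all layers are equal in size."""
    return weight_memory_gb(params_billions, dtype) / num_layers


# Assumption for illustration: check the real value in the model's config.
ASSUMED_LAYERS_72B = 80

for size in (0.5, 1.5, 3, 7, 14, 32, 72):
    print(f"Qwen2.5-{size}B: ~{weight_memory_gb(size):.1f} GB of weights in fp16")

layer_gb = per_layer_memory_gb(72, ASSUMED_LAYERS_72B)
print(f"72B, one layer at a time: ~{layer_gb:.1f} GB per layer in fp16")
```

Loaded all at once in fp16, the 72B weights are far beyond any consumer GPU, while a single layer under these assumptions fits comfortably under 4 GB. The price is speed, because every layer has to be read from disk for every forward pass.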

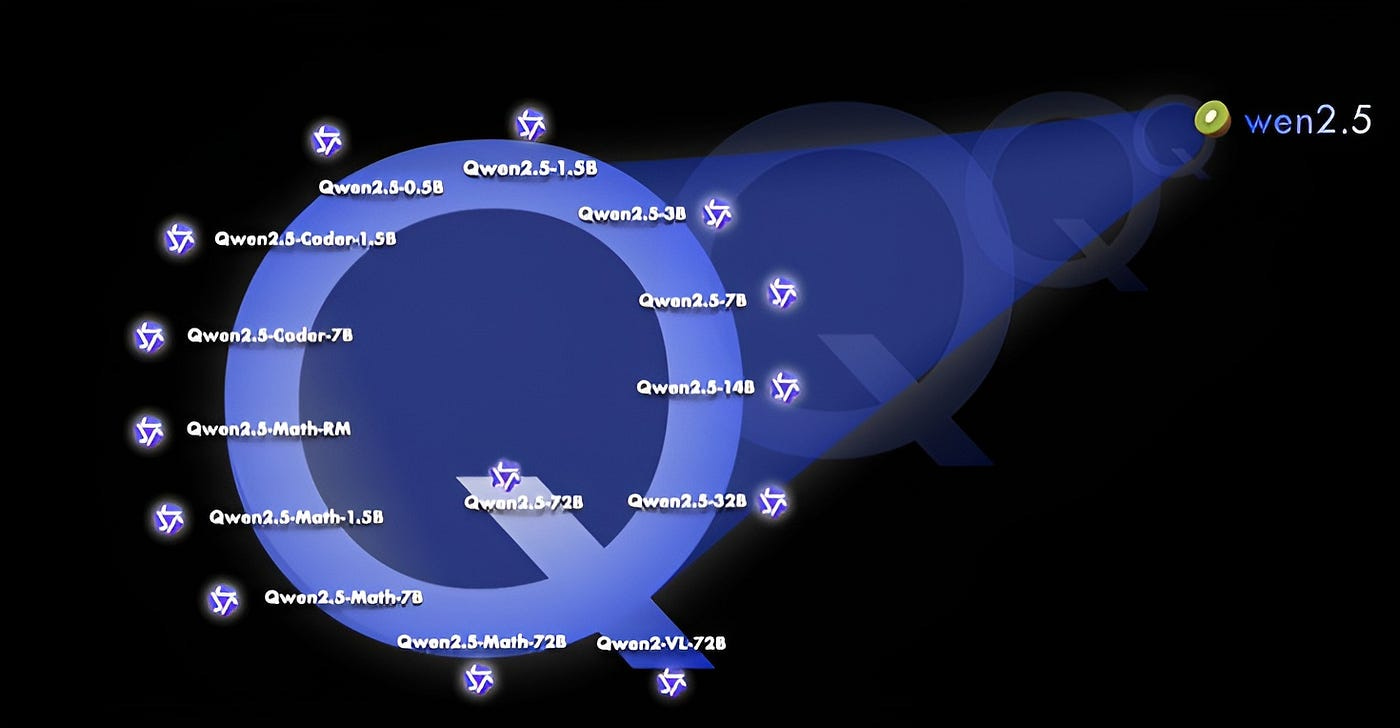

All open-weight models are dense, decoder-only language models, available in various sizes, including:

Qwen2.5: 0.5B, 1.5B, 3B, 7B, 14B, 32B, and 72B

Qwen2.5-Coder: 1.5B and 7B, with 32B on the way

Qwen2.5-Math: 1.5B, 7B, and 72B.

All these open-source models, except for the 3B and 72B variants, are licensed under Apache 2.0. You can find the license files in the respective Hugging Face repositories.

But this is not all!

…we have also open-sourced the Qwen2-VL-72B, which features performance enhancements compared to last month’s release.

In terms of Qwen2.5, the language models, all models are pretrained on our latest large-scale dataset, encompassing up to 18 trillion tokens. Compared to Qwen2, Qwen2.5 has acquired significantly more knowledge (MMLU: 85+) and has greatly improved capabilities in coding (HumanEval 85+) and mathematics (MATH 80+). Additionally, the new models achieve significant improvements in instruction following, generating long texts (over 8K tokens), understanding structured data (e.g., tables), and generating structured outputs, especially JSON.

Qwen2.5-Coder is the new kid in the family

The specialized expert language models, namely Qwen2.5-Coder for coding and Qwen2.5-Math for mathematics, have undergone substantial enhancements compared to their predecessors, CodeQwen1.5 and Qwen2-Math. Specifically, Qwen2.5-Coder has been trained on 5.5 trillion tokens of code-related data, enabling even smaller coding-specific models to deliver competitive performance against larger language models on coding evaluation benchmarks. Meanwhile, Qwen2.5-Math supports both Chinese and English and incorporates various reasoning methods, including Chain-of-Thought (CoT), Program-of-Thought (PoT), and Tool-Integrated Reasoning (TIR).

Expanding Reach through Open-Source Contributions

As part of its ongoing commitment to the broader community, Alibaba Cloud has taken additional steps by releasing Qwen models in various sizes and variants. These include:

1. A 0.5-billion-parameter Qwen model, a foundational version suitable for more traditional applications.

2. Qwen-VL (vision-language), a compact but potent model tailored specifically for game development.

These advancements demonstrate Alibaba’s commitment to open-source AI: it shares not only the base versions of Qwen but also significant improvements and new models that directly target enterprise needs and help enterprises innovate rapidly.

This fits a strategic vision in which continuous contributions benefit both community members and Alibaba's own clients as they look for innovative applications across multiple sectors.

Bridging Industries through cutting-edge AI solutions

To showcase the breadth of Qwen’s capabilities in real-world scenarios, Alibaba Cloud highlights these collaborations:

1. Xiaomi: the company is integrating Alibaba’s models into its AI assistant, Xiao Ai, and deploying it in Xiaomi smartphones and electric vehicles to enable features such as generating images on the car infotainment system via voice commands.

2. Perfect World Games: the integration of Qwen in game development has led to innovative applications, including improved plot resolution through dialogue dynamics and real-time content management.

The collaborations between Alibaba Cloud models and various industries have not only enriched the user experience but also facilitated greater opportunities for growth within these sectors, pushing boundaries that would otherwise be unimaginable without AI advancements.

Future outlook: continued Open-Sourcing

Looking ahead, Alibaba has also expressed its commitment to ongoing open-source contributions by releasing smaller variants of Qwen for developers across different sectors. In fact, many users in the Hugging Face community have already started to fine-tune Qwen for dedicated tasks. I wrote about one example in my article on NuExtract: the smallest variant of that model family is based on Qwen2-0.5B!

These developments in AI technology and model advancements are crucial steps towards leveraging the full potential of large language models like Qwen within a variety of industries. With adoption through Model Studio continuing to grow rapidly, it is clear that Alibaba Cloud has been a pioneer, not only by providing advanced tools but also by promoting innovation across enterprises.

On my side, my plan is to continue with internal testing on the new models, specifically on the small ones, up to 3B.

And here comes the gift from my newsletter. OpenVINO is showing great flexibility in running LLMs on Intel hardware. In this article I show you how to do it with Qwen2.5 too. Believe it or not, after just a few days, we already have on Hugging Face the 1.5B-instruct version compiled for OpenVINO. And you can learn how to do it yourself with this article, for free.

This is only the start!

I hope you find all of this useful. Feel free to contact me on Medium.

I am using Substack only for the newsletter. Every week I share free links here to my paid articles on Medium.

Follow me and read my latest articles: https://medium.com/@fabio.matricardi