Can we prune away the bloat? New techniques for efficient LLMs

Let's explore together the exciting world of Generative AI model optimization!

Dear AI enthusiasts and poor-GPU users like me, are you curious about what's going on in the Generative AI community?

The release of Claude 3.5 Sonnet two weeks ago, together with the introduction of Artifacts, reopened the debate on the real capabilities of LLMs. Specifically, it looks like Generative AI has reached a new state of the art in multimodal understanding and reasoning: the model can turn code and instructions into different modalities, providing an engaging experience for users.

But with the gap between proprietary, closed models and open-source ones widening once more, who can afford to run such behemoths in-house?

Honey, I Shrunk the… AI

Have you ever wondered if the massive Large Language Models (LLMs) we've grown accustomed to could be trimmed down without losing their smarts? Well, grab your popcorn, because today's blog post dives into the exciting world of layer redundancy and how we're redefining efficiency in the realm of AI.

Let's start with a provocative thought: in some large models, almost half of the layers may be just taking up space. That's right! Recent studies suggest that we can slash layers with little or no loss in performance. How cool is that?

But first, let's set the scene. LLMs have exploded in popularity, becoming indispensable tools in our daily lives. They've grown from mere research projects to market giants, boasting billions of parameters. With this growth comes a hefty price tag - not just in terms of dollars but also in energy consumption and environmental impact.

Enter the Layer Layoff (this is for you AI!)

Researchers have discovered that many layers in LLMs are redundant, meaning they don't contribute much to the model's overall performance. This revelation opens up a world of possibilities for a complete restyle of these models and for making them more sustainable.

So, how do we go about slimming these models down? Layer pruning belongs to a wider family of compression techniques. Let's break it down:

Quantization: we transform bulky float32 weights into lightweight integers. This technique reduces the computational load and memory footprint, making models leaner and meaner. This is what sits behind my beloved GGUF files 😍!
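To make the idea concrete, here is a minimal sketch of symmetric int8 quantization in plain Python. It is a toy under my own assumptions (one scale for the whole list, hypothetical function names), not what any specific file format does: real schemes typically work on small blocks of weights, each with its own scale.

```python
def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.05, 0.9, -0.33]  # made-up values for illustration
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now needs one byte instead of four, and the rounding error per weight stays within half a quantization step.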

Pruning: It's time to play "Operation" with those unnecessary weights. Pruning allows us to remove the dead weight without compromising the model's performance. Basically, we put our AI on a smart diet!
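One simple flavour of this is magnitude pruning: the weights closest to zero are assumed to matter least and are set to zero. The sketch below is only an illustration with made-up values and a hypothetical helper name; practical methods use more careful importance scores.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest absolute value."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by magnitude, smallest first
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


weights = [0.8, -0.02, 0.5, 0.01, -0.9, 0.03]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.5, 0.0, -0.9, 0.0]
```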

Knowledge Distillation: Ever heard of the saying, "Less is more"? Knowledge distillation takes the essence of a large model and condenses it into a smaller, often more specialized one. A small "student" model is trained to imitate the outputs of the large "teacher", so we end up with something nearly as smart but much easier to handle.
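A common way to express "imitate the teacher" is a loss that compares the two models' softened output distributions. Here is a minimal sketch of that soft-target loss; the logits are invented and the temperature of 2.0 is just an assumption for the example.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax with temperature; higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.2]       # toy logits over three tokens
good_student = [3.5, 1.2, 0.1]  # close to the teacher
bad_student = [0.1, 3.0, 2.0]   # disagrees with the teacher
loss_good = distillation_loss(good_student, teacher)
loss_bad = distillation_loss(bad_student, teacher)
print(loss_good, loss_bad)
```

The student that agrees with the teacher gets the lower loss, and training pushes the student in that direction.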

But wait, there's more!

Innovative researchers are exploring ways to optimize model architecture itself. They're designing compact models that replace resource-hungry components like self-attention with more efficient alternatives. And let's not forget dynamic networks, where only relevant parts spring into action when needed. Talk about being eco-friendly!

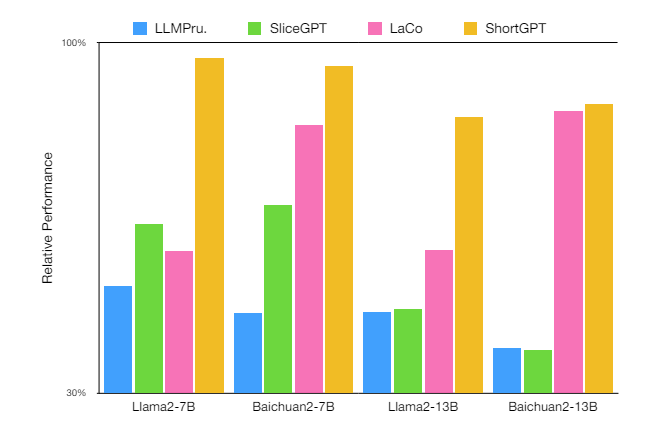

One groundbreaking study suggests that up to 50% of the layers in models like LLaMA-2 70B can be removed without causing a significant performance dip on the benchmarks tested. That's right, folks: we're talking about dropping up to half of the layers! To ensure smooth sailing after such extensive pruning, a bit of fine-tuning (using QLoRA) is necessary to heal any discrepancies.
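How do you decide which layers to cut? The paragraph above does not go into it, so take this as my assumption: a similarity-based criterion, where you look for the block of consecutive layers whose input and output representations point in almost the same direction. The sketch below uses tiny invented 2-dimensional "hidden states" and hypothetical function names.

```python
import math


def angular_distance(a, b):
    """Angle between two vectors, normalized to the range [0, 1]."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    cos = max(-1.0, min(1.0, dot / norm))  # guard against rounding outside [-1, 1]
    return math.acos(cos) / math.pi


def most_redundant_block(hidden_states, block_size):
    """hidden_states[i] is the representation entering layer i (last entry is the final output).
    Returns the start index of the block that changes the representation the least."""
    n_layers = len(hidden_states) - 1
    best_start, best_dist = None, float("inf")
    for start in range(n_layers - block_size + 1):
        d = angular_distance(hidden_states[start], hidden_states[start + block_size])
        if d < best_dist:
            best_start, best_dist = start, d
    return best_start, best_dist


# Toy example: 5 layers, so 6 representations (made-up numbers)
hidden_states = [[1.0, 0.0], [0.7, 0.7], [0.0, 1.0], [-0.05, 1.0], [-0.1, 1.0], [-0.8, 0.6]]
start, dist = most_redundant_block(hidden_states, block_size=2)
kept_layers = [i for i in range(5) if not start <= i < start + 2]
print(start, kept_layers)  # 2 [0, 1, 4]
```

In a real model the hidden states would be averaged over many tokens and samples, and the pruned model would then get the healing fine-tune mentioned above.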

Here's the kicker: this isn't just a one-off phenomenon. Models across the board, from Qwen to Mistral, share this redundancy. Are we discovering a universal truth in the AI universe?

Train More, stay Small

You may have heard the term "Chinchilla Law": it isn't a formal law in the field of Artificial Intelligence, but rather a principle inspired by a research paper published by DeepMind in 2022. The paper, titled "Training Compute-Optimal Large Language Models", introduced the Chinchilla model, which challenged the prevailing wisdom in the AI community regarding the relationship between model size and dataset size.

Traditionally, there has been a trend in AI research towards building increasingly larger models, often with billions of parameters, to improve performance on various tasks. However, the Chinchilla paper suggested that these large models might not be using their capacity effectively because they are undertrained, meaning they are not trained on enough data relative to their size.

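The key insight from the Chinchilla paper is that, for a fixed compute budget, model size and training data should grow together. Many large models of the time were too big for the amount of data they saw, so training a smaller model on more data can bring bigger gains than simply increasing the model size. Chinchilla itself, with 70B parameters, outperformed the four-times-larger Gopher (280B) by training on more than four times as much data.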
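If you want to play with the numbers, here is a back-of-the-envelope sketch. It relies on two popular rules of thumb, not exact results: roughly 20 training tokens per parameter for a compute-optimal model, and training compute of about 6 x parameters x tokens. Treat both constants as assumptions.

```python
def chinchilla_estimate(n_params, tokens_per_param=20):
    """Rough compute-optimal token count and training FLOPs for a model size."""
    tokens = n_params * tokens_per_param
    train_flops = 6 * n_params * tokens  # common approximation for dense transformers
    return tokens, train_flops


for name, n in [("1B", 1e9), ("7B", 7e9), ("70B", 70e9)]:
    tokens, flops = chinchilla_estimate(n)
    print(f"{name}: ~{tokens / 1e9:.0f}B tokens, ~{flops:.2e} training FLOPs")
```

For a 70B model this gives about 1.4 trillion tokens, which is in line with what Chinchilla was trained on.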

There is a really ambitious and interesting research proposal from A*STAR on Super Tiny Language Models (STLMs) with only 10M, 50M, and 100M parameters. They aim to achieve performance competitive with models in the 3B-7B parameter range on GSM8K, MMLU, and LMSYS Chatbot Arena. Their starting point is a base model: a 10-layer Llama 2 (which gives random-guess performance after training). They plan to explore further in two directions:

Model-level: weight tying, byte-level tokenization with a pooling mechanism, mixture of depths, layerskip, and text thought prediction.

Data-level: high-quality data selection and knowledge distillation.
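Why do weight tying and byte-level tokenization matter so much at this scale? A quick parameter count makes it obvious. The hidden size of 512 below is a hypothetical value I picked for illustration; 32,000 is the Llama 2 vocabulary size.

```python
def embedding_params(vocab_size, d_model, tied):
    """Parameters in the input embedding plus the output projection.
    With weight tying, both share one table."""
    table = vocab_size * d_model
    return table if tied else 2 * table


d_model = 512  # hypothetical hidden size for a tiny model
for name, vocab in [("subword vocab, 32,000 entries", 32_000),
                    ("byte-level vocab, 256 entries", 256)]:
    untied = embedding_params(vocab, d_model, tied=False)
    tied = embedding_params(vocab, d_model, tied=True)
    print(f"{name}: untied {untied:,} params, tied {tied:,} params")
```

With a 32,000-entry vocabulary, even the tied embedding table (about 16.4M parameters) is already bigger than a 10M-parameter budget, while a byte-level vocabulary needs only about 0.13M.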

“Super Tiny Language Models (STLMs), which aim to deliver high performance with significantly reduced parameter counts. We explore innovative techniques such as byte-level tokenization with a pooling mechanism, weight tying, and efficient training strategies… We will target models with 10M, 50M, and 100M parameters. Our ultimate goal is to make high-performance language models more accessible and practical for a wide range of applications” - from source

Coming back to Chinchilla, the takeaway is this: for a given compute budget, it is often more effective to train a smaller model on a larger dataset than a larger model on a smaller one. This is what people usually mean by the Chinchilla Law: once a model is already relatively large, performance improves more from adding training data than from adding parameters.

Conclusions… for now

The implications of this research are profound. If we carefully eliminate layers, we create more compact models that can run on a single, commercially available GPU or even a CPU. This means AI becomes accessible to a wider audience, breaking down barriers and fostering innovation.

Not to mention that larger models prove to be more resilient to pruning, indicating a wealth of redundancy waiting to be tapped. It's a win-win situation: reduced memory footprint, faster inference times, and a greener planet.
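To get a feeling for the memory side, here is a rough calculation of how much space the weights alone take. It ignores the KV cache and activations, and it assumes that dropping half of the layers removes roughly half of the parameters, which is only approximately true because the embeddings stay.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Memory needed to store the weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


full = 70e9          # a 70B-parameter model
half_layers = 35e9   # rough assumption: half the layers, about half the parameters

print(f"70B at 16-bit: {weight_memory_gb(full, 16):.1f} GB")
print(f"70B at 4-bit:  {weight_memory_gb(full, 4):.1f} GB")
print(f"~35B at 4-bit: {weight_memory_gb(half_layers, 4):.1f} GB")
```

Under these assumptions, pruning plus 4-bit quantization takes the weights from about 140 GB down to about 17.5 GB, which is the kind of size a single 24 GB consumer GPU could hold.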

So, what does this all mean for the future of AI?

As we continue to refine our understanding of layer redundancy, we'll likely witness a paradigm shift in LLM design. Smaller, more efficient models will finally open the way for widespread adoption and democratization of AI technologies.

The future of AI is in our hands too... This article is my gift for the week!

This is only the start!

Hope you will find all of this useful. Feel free to contact me on Medium.

I am using Substack only for the newsletter. Every week I share free links here to my paid articles on Medium.

Follow me and read my latest articles: https://medium.com/@fabio.matricardi