Meta's new contribution to AI advancement

Public release of new AI research models to accelerate innovation at scale

Meta's Fundamental AI Research (FAIR) team has been a driving force in advancing the state of AI through open research for over a decade. Their commitment to openness, collaboration, excellence, and scale has led to significant breakthroughs in the field, and their latest releases showcase their dedication to the continued growth and development of an open AI ecosystem.

A few days ago, Meta AI publicly shared new research models and datasets.

The reason, beyond the usual suspects, is quite simple: FAIR hopes to inspire the global AI community to explore and discover new ways to apply AI at scale.

The Fundamental AI Research (FAIR) team at Meta seeks to support our fundamental understanding in both new and existing domains, covering the full spectrum of topics related to AI, with the mission of advancing the state-of-the-art of AI through open research for the benefit of all.

FAIR is the team behind the curtain, working on Generative AI technologies and Machine Learning models… as we speak!

And these are the 4 new models, plus some additional artifacts, shared publicly with the AI community:

🦎 Meta Chameleon: 7B & 34B language models that support mixed-modal input and text-only outputs.

🪙 Meta Multi-Token Prediction: pretrained language models for code completion using multi-token prediction.

🎼 Meta JASCO: generative text-to-music models capable of accepting various conditioning inputs for greater controllability. Paper available today, with a pretrained model coming soon.

🗣️ Meta AudioSeal: an audio watermarking model that we believe is the first designed specifically for the localized detection of AI-generated speech, available under a commercial license.

🗣📝 Additional RAI artifacts: research, data and code to measure and improve the representation of geographical and cultural preferences and diversity in AI systems.

We believe that access to state-of-the-art AI creates opportunities for everyone — not just a small handful of Big Tech companies. We’re excited to share this work and to see how the community learns, iterates and builds using this technology.

What about the license agreements?

For everyone ready to saddle up and start dreaming about how to make a profit out of them… well, stop dreaming. Here is an extract from the Chameleon license agreement:

License Rights and Redistribution. Subject to Your compliance with the terms and conditions of this Agreement, Meta hereby grants you the following:

Grant of Rights. You are hereby granted a non-exclusive, worldwide, non-transferable and royalty-free limited license under Meta’s intellectual property or other rights owned by Meta embodied in the Chameleon Materials to use, reproduce, distribute, copy, create derivative works of, and make modifications to the Chameleon Materials solely for Noncommercial Research Uses.

So it is clear what the purpose is, guys…

All the details are here: https://ai.meta.com/blog/meta-fair-research-new-releases/

Why can Meta do this?

One of the key benefits of having a private company like Meta share their research models is the sheer size and resources at their disposal. With a massive workforce and infrastructure, Meta is capable of achieving results that universities and research labs may not be able to match.

Meta is uniquely poised to solve AI’s biggest problems — not many companies have the resources or capacity to make the investments we have in software, hardware, and infrastructure to weave learnings from our research into products that billions of people can benefit from. FAIR is a critical piece to Meta’s success, and one of the only groups in the world with all the prerequisites for delivering true breakthroughs: some of the brightest minds in the industry, a culture of openness, and most importantly: the freedom to conduct exploratory research. This freedom has helped us stay agile and contribute to building the future of social connection. - Joelle Pineau - VP, AI Research

While academic institutions can provide valuable insights and expertise, they often lack the resources necessary to conduct large-scale research projects. Meta's ability to invest heavily in AI research and development allows them to push the boundaries of what is possible and make significant contributions to the field.

But what is Meta's Fundamental AI Research (FAIR)?

Meta's Fundamental AI Research (FAIR) team, founded in 2013, has made significant strides in Artificial Intelligence over the past decade.

Initially assembled by Mark Zuckerberg and Yann LeCun, FAIR has attracted top-tier researchers to work on challenges in AI, leading to breakthroughs in areas like object detection and machine translation.

The team has pioneered techniques for unsupervised machine translation, introduced models for translation across 100 languages without reliance on English, and expanded text-to-speech and speech-to-text technology to over 1,000 languages.

FAIR collaborates with the broader research community, Meta's product teams, and external partners to share datasets, tasks, and competitions.

In 2023, FAIR had a year of substantial research impact, with the release of Llama, an open pre-trained large language model, and other state-of-the-art models. Their work has been recognized with best paper awards at major AI conferences and has been featured widely in the media.

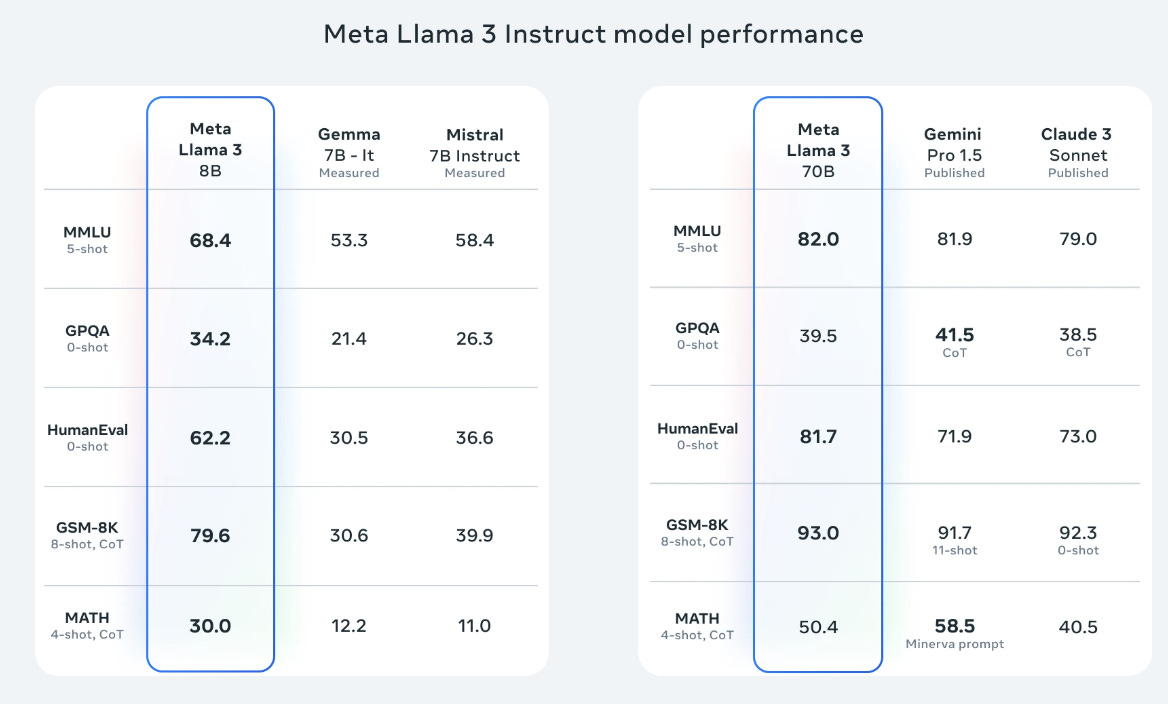

On April 18, 2024, Meta AI unveiled Meta Llama 3, announced as the most advanced open-source large language model (LLM) to date.

The initial release comprises two models with 8B and 70B parameters, demonstrating state-of-the-art performance on various benchmarks and enhanced reasoning capabilities. Meta AI aims to make Llama 3 multilingual and multimodal, and to improve its context window and overall performance.

The models are based on a standard transformer architecture, trained on a vast dataset of over 15 trillion tokens, and fine-tuned using techniques like supervised fine-tuning, rejection sampling, and direct preference optimization.

“AI agent workflows will drive massive AI progress this year” — Andrew Ng

Agentic Outlook

Looking ahead, FAIR anticipates a future where AI progresses by integrating various capabilities, featuring foundation models with general abilities and world models for reasoning and planning. FAIR is committed to responsible AI development, aiming to set high standards of quality and responsibility through open science, contributing to safer, more robust, equitable, and transparent AI solutions.

Llama3-8B has been sized from the beginning to be agent-ready. In fact, while larger models can match the performance of smaller ones with less training compute, smaller models like Llama3-8B are generally preferred because they are much more efficient during inference.
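To give a rough feel for that inference gap, here is a back-of-the-envelope sketch. It assumes the common rule of thumb of about 2 FLOPs per parameter per generated token for a dense transformer forward pass, and uses the nominal 8B and 70B parameter counts. It ignores attention over the context, memory bandwidth, and batching, so take it as an order-of-magnitude estimate and not a benchmark. The function name is my own.

```python
def forward_flops_per_token(n_params: float) -> float:
    """Approximate forward-pass FLOPs per token for a dense transformer.

    Assumption: ~2 FLOPs per parameter per token (one multiply and one add),
    ignoring attention over the context and any hardware effects.
    """
    return 2.0 * n_params


# Nominal parameter counts for the two Llama 3 sizes mentioned above.
small = forward_flops_per_token(8e9)    # 8B model
large = forward_flops_per_token(70e9)   # 70B model
ratio = large / small

print(f"8B:  ~{small:.1e} FLOPs per token")
print(f"70B: ~{large:.1e} FLOPs per token")
print(f"The 70B model needs roughly {ratio:.2f}x more compute per token")
```

Under these assumptions, every token from the bigger model costs several times more compute, and an agent that calls the model many times per task pays that cost at every step.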

Recent research from Meta AI, Allen AI, and the University of Washington tackles one of the most important problems in LLM reasoning.

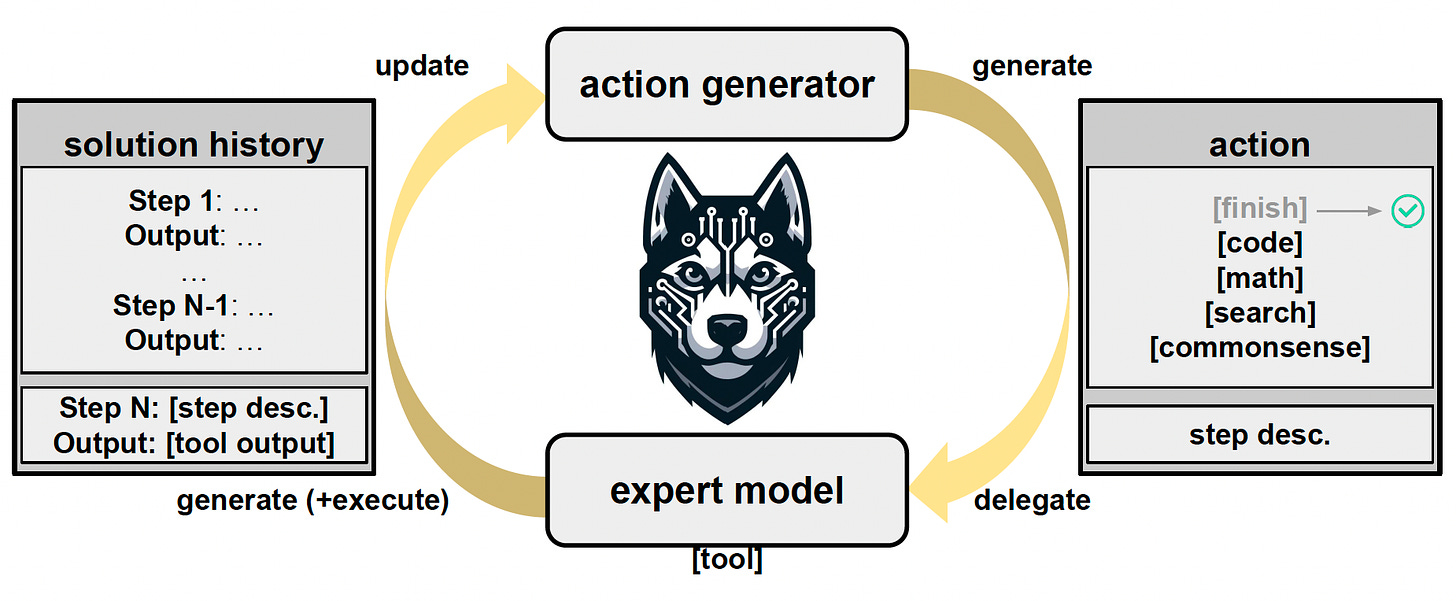

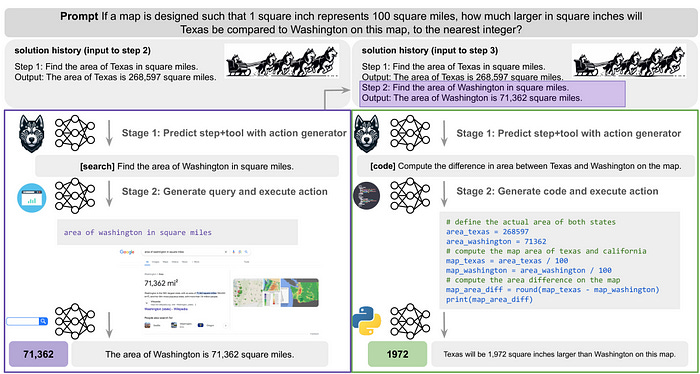

The paper presents HUSKY, a holistic, open-source language agent that learns to reason over a unified action space to address a diverse set of complex tasks involving numerical, tabular, and knowledge-based reasoning. HUSKY iterates between two stages:

generating the next action to take towards solving a given task and

executing the action using expert models and updating the current solution state.

This approach allows HUSKY to function like a modern version of classical planning systems, using large language models (LLMs) to optimize performance.
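The two-stage loop described above can be sketched in a few lines of Python. This is only a toy illustration and not the paper's implementation: the action generator here is a hard-coded plan where a real agent would use an LLM, the single "math" expert is a tiny arithmetic function, and all names (`SolutionState`, `generate_action`, `math_expert`, `run_agent`) are hypothetical.

```python
import operator
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SolutionState:
    """Current solution state: the task plus every executed step so far."""
    task: str
    history: list = field(default_factory=list)  # (tool, step, output) tuples
    answer: Optional[str] = None


def generate_action(state: SolutionState):
    """Stage 1: choose the next (tool, step), or None when the task is solved.

    Toy stand-in: a fixed plan for one example task. A real agent would ask
    a language model to pick the tool and write the step.
    """
    plan = [("math", "3 + 4"), ("math", "{prev} * 5")]
    if len(state.history) >= len(plan):
        return None
    tool, step = plan[len(state.history)]
    prev = state.history[-1][2] if state.history else ""
    return tool, step.replace("{prev}", prev)


OPS = {"+": operator.add, "*": operator.mul}


def math_expert(step: str) -> str:
    """Toy expert: evaluates '<int> <op> <int>' for + and * only."""
    left, op, right = step.split()
    return str(OPS[op](int(left), int(right)))


# Hypothetical registry of expert models, keyed by tool name.
EXPERTS = {"math": math_expert}


def run_agent(task: str, max_steps: int = 10) -> SolutionState:
    state = SolutionState(task)
    for _ in range(max_steps):
        action = generate_action(state)       # stage 1: generate next action
        if action is None:
            break
        tool, step = action
        output = EXPERTS[tool](step)          # stage 2: execute with an expert
        state.history.append((tool, step, output))  # update solution state
    state.answer = state.history[-1][2] if state.history else None
    return state


state = run_agent("What is (3 + 4) * 5?")
print(state.history)
print("Answer:", state.answer)
```

The point of the sketch is the shape of the loop: the generator only decides what to do next, the experts only execute, and the shared state is what ties the steps together.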

The future of AI is agent-oriented. And here is this week's gift for you: my free article on Medium about how to link web search as a tool to your free local AI agents.

This is only the start!

Hope you will find all of this useful. Feel free to contact me on Medium.

I am using Substack only for the newsletter. Here, every week, I give free links to my paid articles on Medium.

Follow me and read my latest articles: https://medium.com/@fabio.matricardi