Needle in a Haystack and Small Language Models

A giant with a 1-million-token context has been released. But is context all you need?

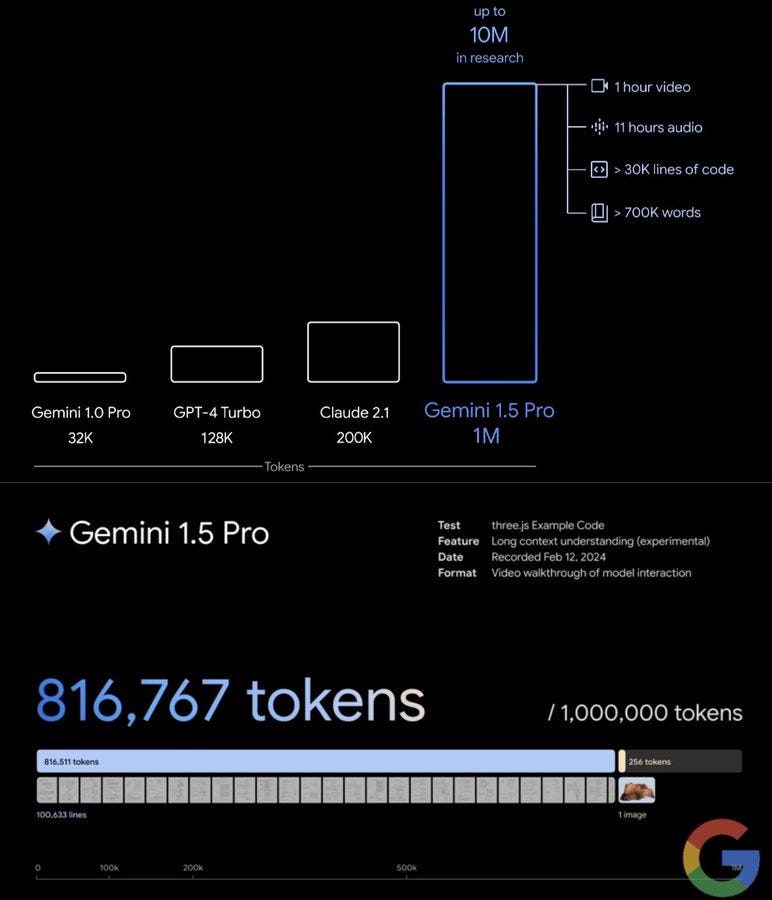

The past week has been hectic, at least a little more than usual. Google announced the release of Gemini 1.5, OpenAI unveiled Sora, its brand new jaw-dropping AI video generator, and StabilityAI announced Stable Diffusion 3, an image generator that can finally render text.

In the midst of these giant announcements, Google also released 2 smaller models, completely free for commercial use.

Today, we’re excited to introduce a new generation of open models from Google to assist developers and researchers in building AI responsibly.

Gemma is a family of lightweight, state-of-the-art open models built from the same research and technology used to create the Gemini models. Developed by Google DeepMind and other teams across Google, Gemma is inspired by Gemini, and the name reflects the Latin gemma, meaning “precious stone.”

Gemma is available worldwide, starting today. Here are the key details to know:

We’re releasing model weights in two sizes: Gemma 2B and Gemma 7B. Each size is released with pre-trained and instruction-tuned variants.

Terms of use permit responsible commercial usage and distribution for all organizations, regardless of size.

But why do we have to care?

An extremely long context window may be a good thing, in theory. Private companies (Google, Cohere, OpenAI), but also the Open Source community, have pushed their skills to the limit to get the best out of it.

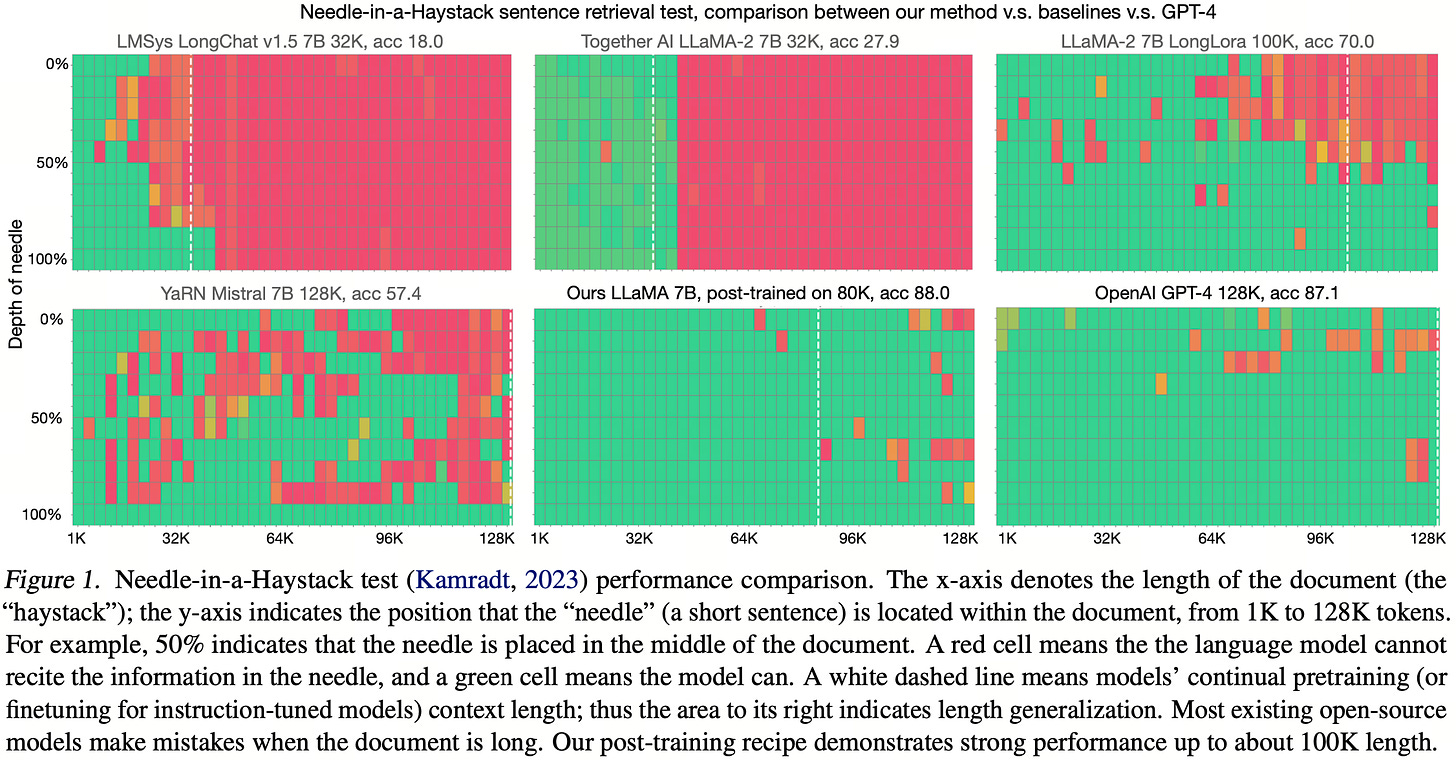

For example, Long-Context-Data-Engineering turned the paper (Yao Fu, Rameswar Panda, Xinyao Niu, Xiang Yue, Hannaneh Hajishirzi, Yoon Kim and Hao Peng. Feb 2024. Data Engineering for Scaling Language Models to 128K Context) into a working, real-world solution.

In short, even Open Source models can now reach up to 90% accuracy in information retrieval across documents up to 128k tokens… and this is awesome!

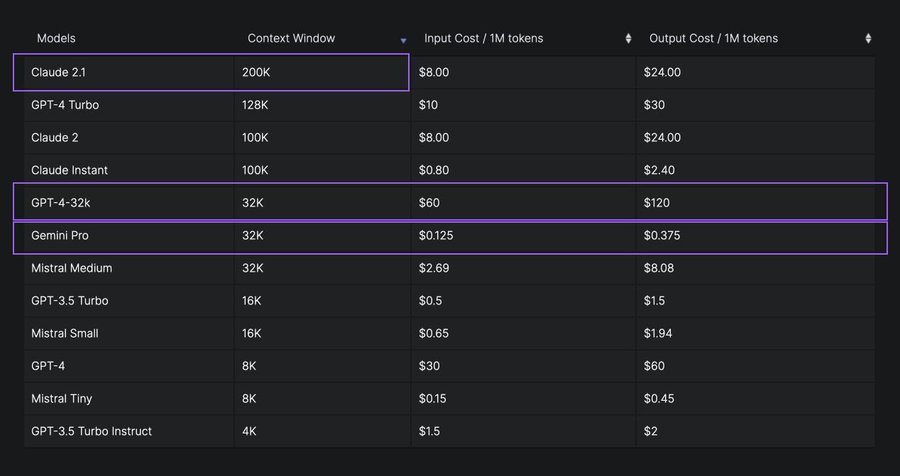

But what about the costs? I am relying on an amazing post on X, a Context Window & Cost Comparison for LLM models, whose author states:

- Claude Instant appears to be the most cost-effective for its context window size, offering a 100K context window for only $0.80 per 1M tokens. But the performance is similar to GPT-3.5 (16K), so it can be a good alternative for simpler tasks and when you need to work with longer text. I'm guessing Gemini Pro 1.5 will become the most cost-effective one once they make it generally available as it's anticipated to have similar capabilities to GPT-4 Turbo, 128K context, at a low price.

- Generally, there seems to be a trade-off between context window size and cost; larger context windows tend to be more expensive, clearly visible for Mistral models. (with the exception of GPT-4 Turbo)

So, do you think it is normal to search across 200k tokens every time you want to do Retrieval Augmented Generation with your preferred Language Model?

I think it is not normal at all.
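To put a rough number on it, here is a minimal sketch of the arithmetic. It uses the $0.80 per 1M tokens figure quoted above for a 100K window; the 8,000-token prompt is my own assumption for a retrieval-trimmed request, and the calculation only counts input tokens, so treat it as an order-of-magnitude estimate rather than a real invoice.

```python
def cost_per_query(prompt_tokens: int, usd_per_million_tokens: float) -> float:
    """Input cost in USD of sending `prompt_tokens` to a model once."""
    return prompt_tokens / 1_000_000 * usd_per_million_tokens


# USD per 1M tokens: the Claude Instant figure quoted in the post above.
PRICE = 0.80

# Filling the whole 100K window on every request.
full_context = cost_per_query(100_000, PRICE)

# Assumption: a retrieval step trims the prompt down to about 8,000 tokens.
small_context = cost_per_query(8_000, PRICE)

ratio = full_context / small_context

print(f"100K-token prompt: ${full_context:.4f} per query")
print(f"8K-token prompt:   ${small_context:.4f} per query")
print(f"ratio: {ratio:.1f}x")
```

The per-query numbers look tiny, but the ratio is what matters once the application answers thousands of questions a day.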

Honestly, you don’t need a Ferrari to go from A to B: you need a really normal car, or even a bike. In the same way, what matters for any business-ready AI application comes down to 2 main factors:

- the accuracy of the result

- the completeness of your data sources

Here lies what I think is the biggest mistake in the general understanding of the generative AI landscape, at least for people like me: we are enthusiasts, we like the innovation, but too often we want to opt for a plug-and-play solution… that is a dangerous shortcut.

I believe we all must work on 2 fronts:

- enrich our data pipeline: we can now ingest documents into vector databases, but we must not forget that sometimes a keyword search is all you need. For that, a normal database is enough, or even a pandas dataframe.

- test the limits of our LLM: honestly, Small Language Models (SLMs) are really good now. SLMs are models below 4B parameters that can run on consumer hardware without a GPU, or simply on a mobile phone.
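As a minimal sketch of the first point, this is what a keyword search over a pandas dataframe can look like. The tiny corpus, the column names (`chunk_id`, `text`) and the helper `keyword_search` are all hypothetical, made up for illustration; a real pipeline would load its own documents.

```python
import pandas as pd

# Hypothetical mini-corpus: one row per document chunk.
docs = pd.DataFrame(
    {
        "chunk_id": [1, 2, 3],
        "text": [
            "Gemma is released in two sizes: 2B and 7B.",
            "Stable Diffusion 3 can render text inside images.",
            "Gemma models come with pre-trained and instruction-tuned variants.",
        ],
    }
)


def keyword_search(frame: pd.DataFrame, keyword: str) -> pd.DataFrame:
    """Return the rows whose text contains `keyword`, ignoring case."""
    # regex=False treats the keyword as a literal string, not a pattern.
    mask = frame["text"].str.contains(keyword, case=False, regex=False)
    return frame[mask]


hits = keyword_search(docs, "gemma")
print(hits["chunk_id"].tolist())
```

No embeddings, no vector store: for exact names, product codes or IDs, a literal match like this is often the most reliable first filter.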

Gemma-2B, Cosmo-1.8B, Quyen-1.8B (or Qwen-1.8B) are all amazing candidates that can cover a huge range of NLP tasks, without paying a penny.

For example, both Gemma-2B (2.51 billion parameters) and Quyen-1.8B have a context window of 8000 tokens, while Cosmo-1.8B has a context window of 2000 tokens. It may be worth testing how well these models can find a Needle in a Haystack.
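A Needle in a Haystack test can be sketched in a few lines: hide one fact (the needle) at different depths inside filler text, ask the model about it, and record whether the answer contains the fact. Everything named here is an assumption for illustration: the filler, the needle, and above all `fake_model`, which is only a stand-in that "reads" the first 5000 characters. Replace it with a real call to whichever small model is under test.

```python
from typing import Callable

FILLER = "The sky was grey and the market was quiet that day."
NEEDLE = "The secret code for the vault is 7481."
QUESTION = "What is the secret code for the vault?"


def build_haystack(filler: str, needle: str, n_sentences: int, depth: float) -> str:
    """Repeat `filler` and insert `needle` at a relative depth (0.0 = start, 1.0 = end)."""
    sentences = [filler] * n_sentences
    sentences.insert(round(depth * n_sentences), needle)
    return " ".join(sentences)


def needle_test(
    ask_model: Callable[[str], str], depths: list[float], n_sentences: int = 200
) -> dict[float, bool]:
    """Return, for each depth, whether the model's answer contains the hidden code."""
    results = {}
    for depth in depths:
        haystack = build_haystack(FILLER, NEEDLE, n_sentences, depth)
        prompt = f"{haystack}\n\nQuestion: {QUESTION}\nAnswer:"
        results[depth] = "7481" in ask_model(prompt)
    return results


def fake_model(prompt: str) -> str:
    """Stand-in for a real model: it only sees the first 5000 characters."""
    visible = prompt[:5000]
    return "7481" if "7481" in visible else "I don't know."


results = needle_test(fake_model, depths=[0.0, 0.5, 1.0])
print(results)
```

With a real model, growing `n_sentences` until the prompt approaches the context window shows where retrieval starts to break down.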

Why? Because I believe that a good semantic + keyword search with good candidates, maybe with a good summary as additional context, can be more than enough to provide the ground truth for our AI applications. And believe me, 8k tokens are really a lot!
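One way to picture this is a small packing step: take the candidates the search already ranked, add an optional summary, and stop before the token budget is exceeded. This is only a sketch under my own assumptions: `pack_context` and `rough_token_count` are hypothetical helpers, and counting whitespace-separated words is a crude proxy for a real tokenizer.

```python
def rough_token_count(text: str) -> int:
    """Crude estimate: one token per whitespace-separated word (real tokenizers differ)."""
    return len(text.split())


def pack_context(ranked_chunks: list[str], budget: int, summary: str = "") -> str:
    """Join an optional summary and the best-ranked chunks without exceeding `budget`."""
    parts = [summary] if summary else []
    used = rough_token_count(summary)
    for chunk in ranked_chunks:
        cost = rough_token_count(chunk)
        if used + cost > budget:
            break  # stop at the first chunk that does not fit
        parts.append(chunk)
        used += cost
    return "\n\n".join(parts)


# Toy example with a tiny budget so the cut-off is easy to see.
chunks = ["alpha beta gamma", "delta epsilon", "zeta eta theta iota"]
context = pack_context(chunks, budget=6, summary="short summary")
print(context)
```

With a budget of 8000 instead of 6, the same loop fills a small model's window with the most relevant material first.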

Conclusions: Don't just take my word for it...

Like every week, I am giving out free access to one of my articles on Medium. They are usually behind the paywall… but not for you!

So, don’t just take my word about enriching the data pipeline, or about the power and limits of Small Language Models. Read my article: it walks through an example of keyword search and semantic search.

This is only the start!

Hope you will find all of this useful. Feel free to contact me on Medium.

I am using Substack only for the newsletter. Here, every week, I give out free links to my paid articles on Medium.

Follow me and read my latest articles: https://medium.com/@fabio.matricardi